The UK tech sector continues to boom despite Brexit-related fears for the industry. In 2017, UK firms attracted almost four times as much funding as companies in Germany, France, Ireland and Sweden combined. It is no surprise that London accounted for around 80% of that funding, with tech entrepreneurs and businesses attracted by the capital’s talent pool and its lead in cutting-edge technologies such as artificial intelligence (AI) and fintech. In fact, close to £3 billion in venture capital funding was secured, according to the Mayor of London’s official promotional agency, London & Partners – almost double the 2016 figure.

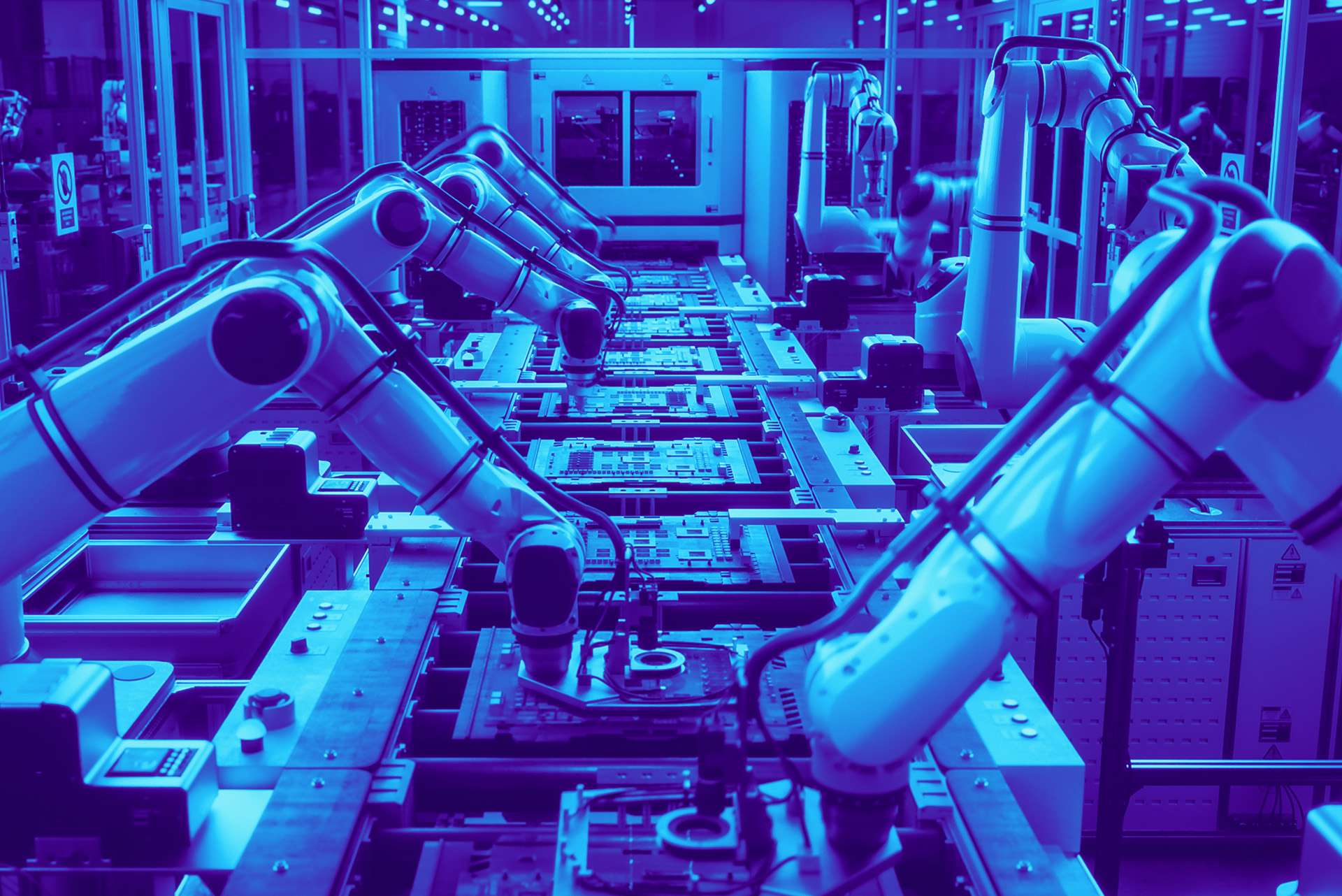

What this perhaps signals is a growing appetite for tech and a demand to use it, not only to streamline operations but also to push business forward. Emerging tech trends are in fact set to transform what organisations offer. According to industry experts, the acceleration of AI, bitcoin, augmented reality (AR) and blockchain will continue to make an impact on business this year. But we are likely to see the biggest impact in the Internet of Things (IoT) sector, which has been called the ‘fourth industrial revolution’. IoT has already transformed manufacturing and production and, according to Steve Jones, principal consultant at cloud company Amido, “the industrial IoT will enable data-driven manufacturing and will introduce huge productivity boosts to industry”.

We’re also likely to see a big shake-up in the boardroom, with the CIO and CTO driving innovation and digital transformation. While the CIO role has traditionally been seen as averse to new technology, Phil Lander, head of B2B at Samsung Europe, states that it is evolving and that CIOs are now asking how technology can better benefit their entire business.

But it’s not all positive: tech companies can expect more regulation this year, particularly in the US, where the proposed Honest Ads Act would force tech giants to make digital ad data public in national elections. Regulatory attention is also likely to extend well beyond political ads, including whether tech companies intentionally make their products addictive and how they address concerns around privacy.

Still, it’s clear that the tech industry has real momentum and will continue to grow in 2018. The UK government is investing an extra £21 million in Tech City UK over four years; Facebook has opened a new office in London, pledging to create 800 new jobs; Google is planning a £1bn London-based headquarters; and Amazon has opened a centre in Cambridge dedicated to product research, moved its HQ to East London and announced 1,000 new jobs in Bristol.

But it is not just the pure tech players that are thriving; as mentioned, the key tech verticals have also been growing. So, moving into the new year, there is a lot of optimism around the sector. With nearly 25 years of experience working with technology companies, we at Whiteoaks are also excited to see what the sector brings in 2018 – could it be another record breaker?